Quickly scaling services up (and down) is a major advantage of microservice architectures. Fortunately, with a container orchestrator such as DC/OS, scaling is easy. Unfortunately, many containerized applications have a resource-intensive startup phase before they can serve real load, which can slow down the scaling process. For example, JVM-based applications have to start the JVM before doing any useful work. Frameworks such as Spring Boot can increase startup times even more. A sample Spring Boot application takes over 20 seconds to start: Started Application in 18.692 seconds (JVM running for 20.006).

In DC/OS 1.9 and earlier, applications could use more resources than they were allocated during this startup period. When DC/OS enabled CFS CPU isolation (with the release of 1.10), which caps a container's CPU usage at its allocation, users started to notice their startup times getting longer.

So wouldn't it be nice if we could give an application some extra resources (e.g., CPU and Memory) while it was starting up and then take them back during the runtime of the task? Unfortunately, container schedulers can't allocate variable resources over the lifetime of a task/container. This means that in order to scale quickly, tasks with high startup requirements need a lot of extra resources over their entire, potentially long runtimes, which in turn results in lower cluster utilization.

DC/OS Pods were originally designed for task colocation, but here we will take advantage of the fact that resource accounting is done on a per-Pod level to allocate extra resources for a Spring Boot application during its startup period. If you want to learn more about Pods, and especially how Mesos nested containers differ from the Kubernetes concept of Pods, we highly recommend this MesosCon Keynote.

So, the idea is to deploy our JVM application not as a single task, but inside a Pod. The Pod allows us to deploy a dummy startup container/task next to our actual Spring Boot container/task. This startup task does no work (e.g., sleep 100), and its only function is to allocate the extra resources that the application needs during startup. To make this more concrete, let's take a look at the simplified Pod definition below (the full definition is here):

The green box defines our springboot-app, while the orange box defines the startup task.
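As a rough illustration of the two-container layout described above, the following Python sketch builds a Marathon-style Pod definition and sums its resources. It is not the article's actual definition: the pod id, the image name, and the per-container split (0.5 CPU / 512 MB for the app, 1.5 CPUs / 512 MB for the startup task) are assumptions, chosen only so that the totals come to 2 CPUs and 1024 MB.

```python
import json


def build_pod(app_cpus, app_mem, startup_cpus, startup_mem, startup_seconds=100):
    """Return a Marathon-style pod definition with an app and a startup container."""
    return {
        "id": "/springboot-pod",  # hypothetical pod id
        "containers": [
            {
                "name": "springboot-app",
                "resources": {"cpus": app_cpus, "mem": app_mem},
                # hypothetical image name, not taken from the article
                "image": {"kind": "DOCKER", "id": "example/springboot-app"},
            },
            {
                # Dummy task: it only holds resources, then exits.
                "name": "startup",
                "resources": {"cpus": startup_cpus, "mem": startup_mem},
                "exec": {"command": {"shell": f"sleep {startup_seconds}"}},
            },
        ],
        "networks": [{"mode": "host"}],
        "scaling": {"kind": "fixed", "instances": 1},
    }


def pod_totals(pod):
    """Sum CPU and memory over all containers; isolation applies to this total."""
    cpus = sum(c["resources"]["cpus"] for c in pod["containers"])
    mem = sum(c["resources"]["mem"] for c in pod["containers"])
    return cpus, mem


# Assumed split; only the totals (2 CPUs, 1024 MB) come from the article.
pod = build_pod(app_cpus=0.5, app_mem=512, startup_cpus=1.5, startup_mem=512)
print(json.dumps(pod, indent=2))
print(pod_totals(pod))
```

The printed JSON could serve as a starting point for a Pod definition, but the field values should be checked against the full definition linked above and the Marathon Pod documentation before deploying.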

How will the startup task impact the resources available to the Spring Boot task, and what will happen after the startup task has finished?

Because DC/OS enforces resource isolation on the Pod level, the two containers in the Pod share a total of 2 CPUs and 1024 MB of memory. Since the startup task is just running sleep 100, it will not use many resources, leaving most of them to the Spring Boot task. After 100 seconds the startup task is considered complete and won't restart, while the springboot-app container keeps running, which we can confirm by looking at the DC/OS UI:

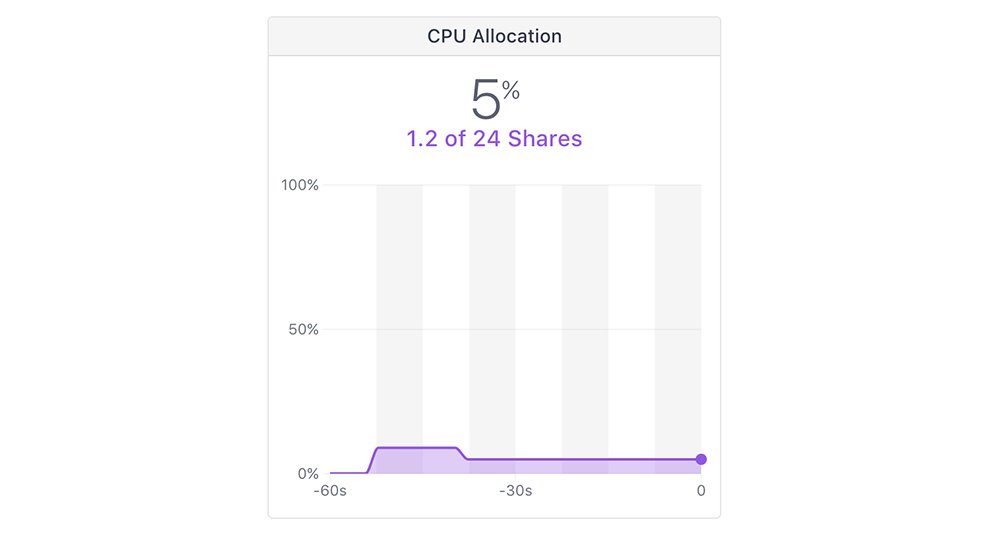

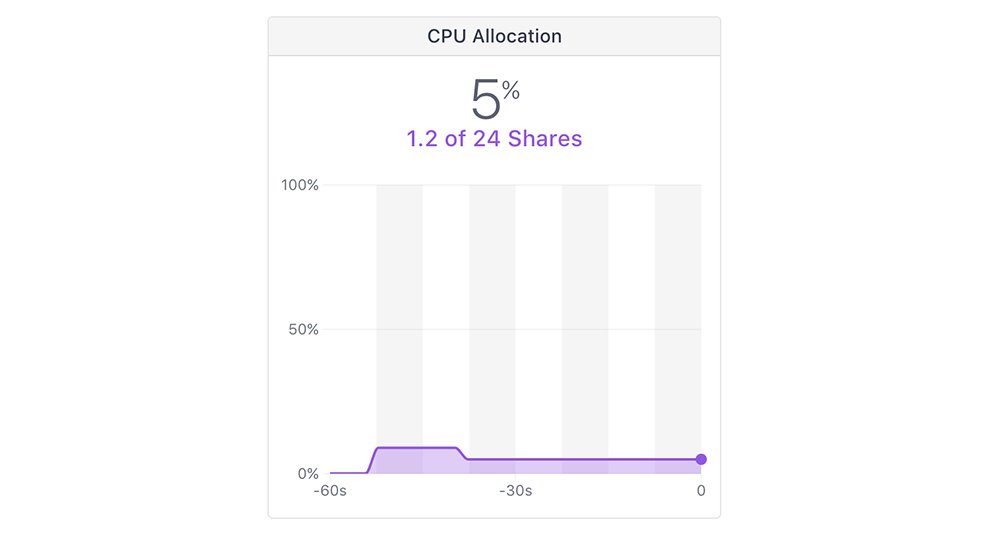

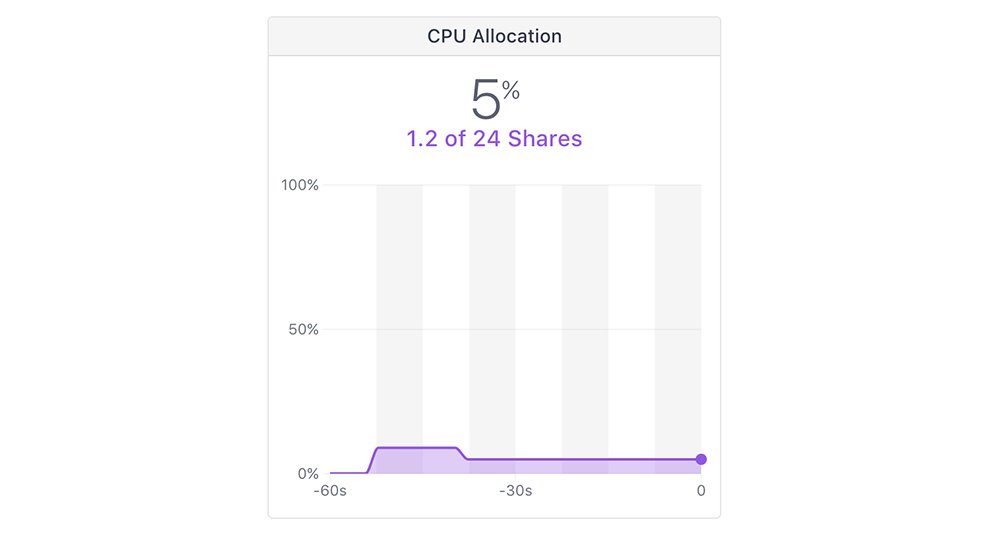

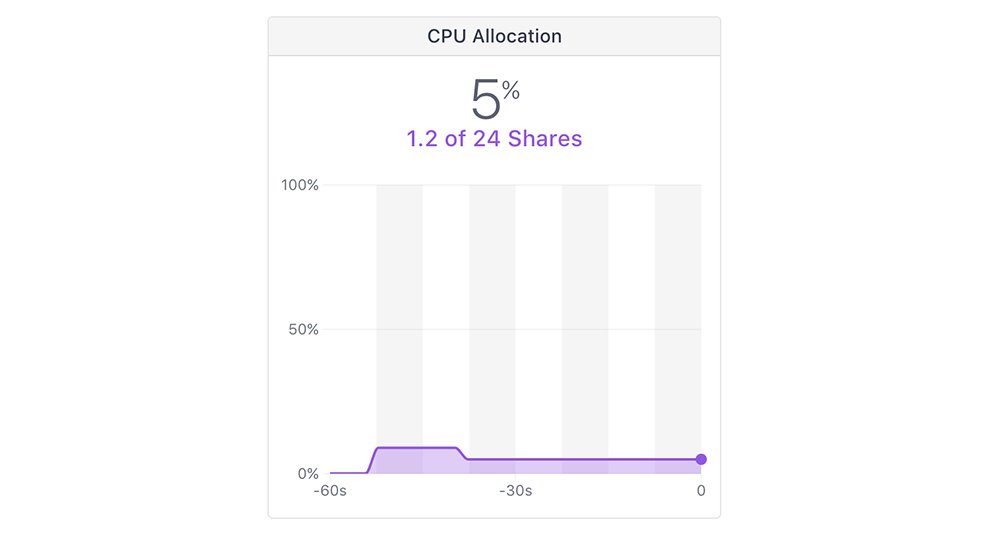

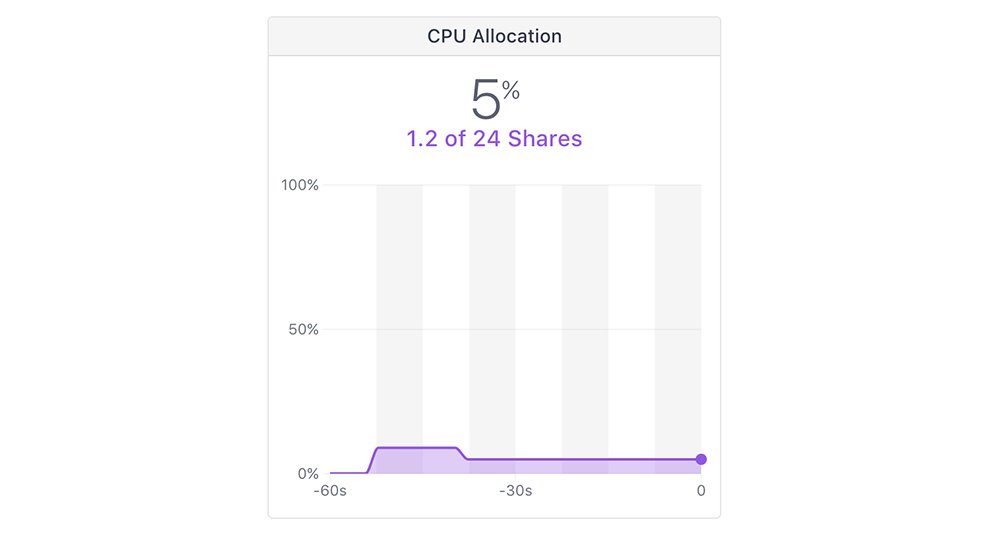

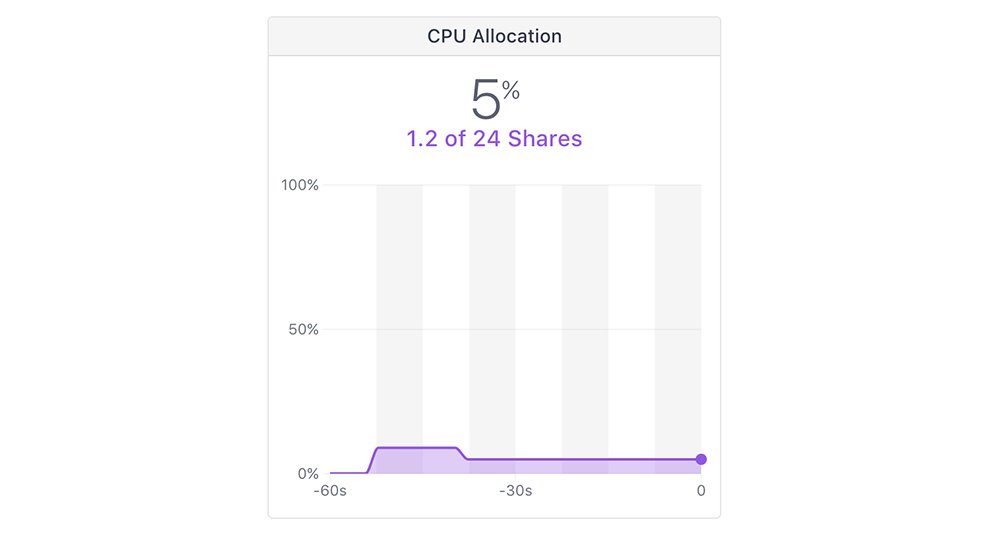

This means that the startup task's resource allocation will be freed up again, which we can also confirm when looking at the DC/OS UI.

This is the exact behavior we were looking for: some extra resources are available for faster startup times, and a lower, constant resource allocation is available during the rest of the lifecycle. Note that this is also useful for any application that requires a lot of resources during initialization and far fewer during the remainder of its lifecycle.
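To get a feel for what this means for cluster utilization, here is a back-of-the-envelope sketch comparing the CPU-seconds reserved by a statically oversized task with those reserved by a task that only gets a boost during startup. The one-day lifetime and the 0.5 CPU steady-state allocation are assumptions for illustration; only the 2 CPU total and the 100-second startup window come from the example above.

```python
def reserved_cpu_seconds(lifetime_s, steady_cpus, boost_cpus=0.0, boost_s=0.0):
    """CPU-seconds reserved over a task's lifetime.

    steady_cpus is held for the whole lifetime; boost_cpus is held only for
    the first boost_s seconds (the lifetime of the startup task).
    """
    boost_window = min(boost_s, lifetime_s)
    return steady_cpus * lifetime_s + boost_cpus * boost_window


LIFETIME_S = 24 * 60 * 60  # assumption: the task runs for one day

# Static sizing: hold all 2 CPUs for the whole lifetime.
static = reserved_cpu_seconds(LIFETIME_S, steady_cpus=2.0)

# Startup task: 0.5 CPU steady (assumed) plus 1.5 CPUs for the first 100 s.
boosted = reserved_cpu_seconds(
    LIFETIME_S, steady_cpus=0.5, boost_cpus=1.5, boost_s=100
)

print(f"static:  {static:.0f} CPU-seconds")
print(f"boosted: {boosted:.0f} CPU-seconds")
print(f"ratio:   {boosted / static:.3f}")
```

The longer the task runs, the less the short startup boost matters, so the reserved capacity approaches the steady-state allocation.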

So how much did that improve the startup time? The Spring Boot application now starts in less than 10 seconds: Started Application in 8.688 seconds (JVM running for 9.346). So we cut the startup time to less than half!

We can improve the startup task in a number of ways. For example, we could replace sleep 100 with an app that monitors whether the Spring Boot application has successfully started and then completes. The disadvantage of this method is that we'd need more logic (potentially even a real Docker image), which would, of course, also consume resources itself. While testing different solutions, we found that sleep X works surprisingly well when you adjust X to your specific application.
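A monitoring startup task along those lines might look like the minimal Python sketch below. It is an illustration, not something the approach above depends on. It assumes the application exposes Spring Boot Actuator's health endpoint at the URL shown and that the startup container can reach it via localhost; both the URL and the function names are hypothetical. The task polls until the endpoint answers or a maximum wait has passed, then completes either way, so in the worst case it behaves like sleep 100.

```python
import time
import urllib.request

# Assumption: the app exposes Spring Boot Actuator's health endpoint here.
HEALTH_URL = "http://localhost:8080/actuator/health"


def http_probe(url=HEALTH_URL, timeout=2.0):
    """Return True if the URL answers with HTTP 200, False on any error."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except OSError:  # covers URLError, HTTPError, timeouts, refused connections
        return False


def wait_until_ready(probe, max_wait_s=100.0, interval_s=1.0,
                     sleep=time.sleep, clock=time.monotonic):
    """Poll probe() until it succeeds or max_wait_s has elapsed."""
    deadline = clock() + max_wait_s
    while clock() < deadline:
        if probe():
            return True
        sleep(interval_s)
    return False


def main():
    """Entry point for the startup container: exit 0 whether or not the app came up."""
    wait_until_ready(http_probe)
    return 0


# Demo with a fake probe that succeeds on the third attempt (no network needed).
attempts = iter([False, False, True])
print(wait_until_ready(lambda: next(attempts), interval_s=0.0))
```

In a real startup container, the command would call main() and exit with its return value. Note that this requires a Python runtime in the container, which is exactly the extra overhead mentioned above.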
