For more than five years, DC/OS has enabled some of the largest, most sophisticated enterprises in the world to achieve unparalleled levels of efficiency, reliability, and scalability from their IT infrastructure. But now it is time to pass the torch to a new generation of technology: the D2iQ Kubernetes Platform (DKP). Why? Kubernetes has now achieved a level of capability that only DC/OS could formerly provide and is now evolving and improving far faster (as is true of its supporting ecosystem). That’s why we have chosen to sunset DC/OS, with an end-of-life date of October 31, 2021. With DKP, our customers get the same benefits provided by DC/OS and more, as well as access to the most impressive pace of innovation the technology world has ever seen. This was not an easy decision to make, but we are dedicated to enabling our customers to accelerate their digital transformations, so they can increase the velocity and responsiveness of their organizations to an ever-more challenging future. And the best way to do that right now is with DKP.

Executive Summary

Synthetic benchmarks of some individual DC/OS components (Mesos, Marathon, Admin Router, the Identity and Access Management service, and dcos-net) confirmed that the Meltdown and Spectre mitigations impose a performance penalty. In the vast majority of cases, these penalties are negligible and should not have a noticeable impact on performance. Nevertheless, we advise making sure that machines running DC/OS masters are not running at (or close to) 100% CPU load and have at least 15-20% of CPU headroom available.
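One way to check the 15-20% headroom guideline on a master node is to sample the kernel's aggregate CPU counters twice and compute the idle share of that interval. The sketch below assumes a Linux host that exposes `/proc/stat`. The function names are hypothetical, and counting `iowait` as idle time is our own simplifying choice, not a DC/OS rule.

```python
import time
from typing import List


def read_cpu_times(path: str = "/proc/stat") -> List[int]:
    """Return the aggregate 'cpu' jiffy counters from /proc/stat (Linux only)."""
    with open(path) as f:
        for line in f:
            if line.startswith("cpu "):
                return [int(x) for x in line.split()[1:]]
    raise RuntimeError(f"no aggregate cpu line in {path}")


def cpu_headroom(before: List[int], after: List[int]) -> float:
    """Share of CPU time that was idle between two /proc/stat samples."""
    # Field order: user nice system idle iowait irq softirq steal [guest guest_nice].
    # guest and guest_nice are already included in user and nice, so only the
    # first eight fields are summed to avoid double counting.
    total = sum(after[:8]) - sum(before[:8])
    idle = (after[3] + after[4]) - (before[3] + before[4])  # idle + iowait
    if total <= 0:
        raise ValueError("samples must span a non-zero interval")
    return idle / total


def check_master_headroom(min_headroom: float = 0.15, interval_s: float = 5.0) -> bool:
    """Sample CPU counters interval_s apart and compare the idle share to min_headroom."""
    before = read_cpu_times()
    time.sleep(interval_s)
    after = read_cpu_times()
    headroom = cpu_headroom(before, after)
    print(f"CPU headroom: {headroom:.1%} (minimum {min_headroom:.0%})")
    return headroom >= min_headroom
```

A single five-second window can be misleading, so running `check_master_headroom()` repeatedly during peak cluster activity gives a more representative picture.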

The end-to-end tests of DC/OS didn't reveal any measurable performance degradation from the end user's point of view.

Last but not least, we would like to stress that the performance impact users experience depends heavily on the workloads they actually run. We strongly advise users running performance-sensitive workloads to independently assess how those workloads are affected after patching their systems.

Introduction

This advisory discusses the impact of the Spectre and Meltdown vulnerabilities recently disclosed by multiple security researchers. Details of the vulnerabilities, as well as references to the original research, can be found at https://meltdownattack.com/.

Operating system and hardware vendors have recently released patches that address the reported vulnerabilities. Below we explain the impact these patches have on DC/OS performance.
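Before measuring anything, it helps to confirm that a node is actually running with the mitigations enabled. Newer Linux kernels (the interface appeared around 4.15, and some distributions backported it) report a status line per vulnerability under `/sys/devices/system/cpu/vulnerabilities/`, such as `Mitigation: PTI`, `Vulnerable`, or `Not affected`. A minimal sketch; the helper names are hypothetical, and the directory may be absent on older kernels.

```python
import os
from typing import Dict, List

VULN_DIR = "/sys/devices/system/cpu/vulnerabilities"


def mitigation_status(vuln_dir: str = VULN_DIR) -> Dict[str, str]:
    """Map each vulnerability file (e.g. 'meltdown') to the kernel's reported status."""
    status: Dict[str, str] = {}
    if not os.path.isdir(vuln_dir):
        return status  # older kernels may not expose this directory at all
    for name in sorted(os.listdir(vuln_dir)):
        with open(os.path.join(vuln_dir, name)) as f:
            status[name] = f.read().strip()
    return status


def unmitigated(status: Dict[str, str]) -> List[str]:
    """Return the vulnerabilities whose status line starts with 'Vulnerable'."""
    return [name for name, text in status.items() if text.startswith("Vulnerable")]
```

An empty result from `mitigation_status()` does not prove a node is safe; it only means the kernel does not report its status through this interface.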

The tests were performed on DC/OS 1.10.4 (the latest stable release as of January 18, 2018) using Intel CPUs of the Haswell microarchitecture family.

Meltdown and Spectre Mitigations' Impact on Individual Components

Mesos

The work of Mesos master nodes involves responding to events from inside the cluster, responding to external requests, and managing and persisting the relevant cluster state. Typical workloads are bound by CPU or memory throughput; some I/O-bound tasks are also performed, but they are less common in the typical operation of a Mesos cluster.

We conducted a number of micro-benchmarks of Mesos master performance for clusters with up to 1000 agents and 100 frameworks. In CPU- and memory-bound operations, such as resource handling or data serialization during scheduler reconciliation, we observed wall-clock duration increases of up to 10% for some benchmark workloads. We observed changes of similar magnitude for I/O-bound workloads, such as persisting or updating the master state in its registry. However, none of these effects were observed to have a measurable impact on the overall performance of a DC/OS cluster.
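A wall-clock comparison like this boils down to timing the same operation repeatedly on an unpatched and a patched host and comparing typical durations. The sketch below is a generic harness, not the Mesos benchmark suite. `wall_clock_samples` and `relative_change` are hypothetical names, and using the median rather than the mean is our choice to dampen outliers.

```python
import statistics
import time
from typing import Callable, List


def wall_clock_samples(fn: Callable[[], None], repeats: int = 20) -> List[float]:
    """Run fn repeatedly and record the wall-clock duration of each run in seconds."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return samples


def relative_change(baseline: List[float], patched: List[float]) -> float:
    """Relative change in median duration; 0.10 means the patched runs are 10% slower."""
    base = statistics.median(baseline)
    return (statistics.median(patched) - base) / base
```

Under this definition, a `relative_change` of `0.10` corresponds to the upper end of the increases reported above.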

DC/OS core components

In DC/OS Enterprise, the Identity and Access Management service (IAM) responds to permission lookup requests and enables individual DC/OS components to make authorization decisions. Low IAM response latency is important for keeping the DC/OS cluster responsive to user-triggered actions. Our synthetic benchmarks showed that neither the IAM's throughput nor its latency distribution changes significantly after the Spectre/Meltdown mitigations are deployed.

Admin Router is an HTTP proxy running on DC/OS master nodes as well as agent nodes. It contains custom logic, for example for authentication and authorization, and is generally responsible for routing both cluster-internal and external HTTP requests. Under synthetic stress, we found that the endpoints we expect to be hit most in busy DC/OS clusters are not noticeably affected by the Spectre/Meltdown mitigations (again, we tested both throughput and latency distribution).
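Comparing a latency distribution, rather than a single average, means looking at several percentiles before and after patching, alongside raw throughput. A minimal sketch, assuming the per-request latencies have already been collected by some load generator pointed at IAM or Admin Router. The function names are hypothetical, and `statistics.quantiles` requires Python 3.8 or later.

```python
import statistics
from typing import Dict, List


def latency_summary(samples_ms: List[float]) -> Dict[str, float]:
    """Summarize a latency distribution by its 50th, 95th and 99th percentiles."""
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def compare(before: List[float], after: List[float]) -> Dict[str, float]:
    """Relative change of each percentile after applying the mitigations."""
    b, a = latency_summary(before), latency_summary(after)
    return {k: (a[k] - b[k]) / b[k] for k in b}


def throughput(requests: int, elapsed_s: float) -> float:
    """Completed requests per second over a measurement window."""
    return requests / elapsed_s
```

Tail percentiles such as p99 often move before the median does, which is why the comparison reports each one separately.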

Networking components

The DC/OS network stack provides three different services: a DNS-based service discovery mechanism (spartan/mesos-dns), a layer 4 load balancer (minuteman) that uses the kernel IPVS module as its data plane, and a layer 7 load balancer (marathon-lb) based on HAProxy.

To determine the impact of the Spectre/Meltdown patches, we ran throughput tests for our layer 4 and layer 7 load balancers, as well as query rate tests for our DNS-based service discovery mechanism.

Our load-balancer throughput tests show that while application throughput itself may be significantly affected by these patches (we have seen throughput drops of as much as 40% for certain applications), the overhead associated with layer 4 or layer 7 load balancing does not change. In other words, the load-balancing service provided by DC/OS does not contribute significantly to the performance hit that the applications themselves may incur.
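The distinction between application throughput and load-balancer overhead can be made concrete with a little arithmetic: here, overhead is taken as the fraction of throughput lost when traffic goes through the load balancer instead of straight to the application. This definition is our assumption for illustration. The figures below are hypothetical, chosen only to mirror a 40% application-level drop; they are not measurements from these tests.

```python
def lb_overhead(direct_rps: float, via_lb_rps: float) -> float:
    """Fraction of throughput lost by routing requests through the load balancer."""
    if direct_rps <= 0:
        raise ValueError("direct throughput must be positive")
    return 1.0 - via_lb_rps / direct_rps


# Hypothetical figures (requests per second), not measured results.
before = lb_overhead(direct_rps=10_000, via_lb_rps=9_500)  # unpatched host
after = lb_overhead(direct_rps=6_000, via_lb_rps=5_700)    # patched host, 40% lower

print(f"overhead before patching: {before:.1%}")  # 5.0%
print(f"overhead after patching:  {after:.1%}")   # 5.0%
```

Both absolute rates fall by 40%, yet the overhead stays at 5%, which is the pattern the load-balancer tests describe.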

In addition to the above tests, we also ran DNS query rate tests against our DNS service. While we did not observe a drop in the DNS query processing rate on patched systems, we did see a 10-15% increase in CPU utilization. Note that we intentionally stressed the system by raising the query rate to 500 queries per second in order to observe these effects. We do not expect any noticeable impact on CPU utilization under normal operating conditions, where the DNS query rate is expected to be considerably lower.
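A query rate test of this kind can be sketched as a sender that issues DNS queries at a fixed target rate and counts the replies, while CPU utilization on the DNS host is observed separately with ordinary OS tools. This is not the tool used for the tests above. The resolver address and query name are placeholders, and the lockstep send-then-wait loop is a simplification; a real load generator would send asynchronously.

```python
import socket
import struct
import time


def build_query(name: str, query_id: int) -> bytes:
    """Encode a minimal DNS query for an A record in RFC 1035 wire format."""
    # Header: ID, flags (only RD, "recursion desired", set), QDCOUNT=1, AN/NS/ARCOUNT=0.
    header = struct.pack("!HHHHHH", query_id & 0xFFFF, 0x0100, 1, 0, 0, 0)
    # QNAME: each label prefixed by its length, terminated by a zero-length label.
    labels = name.rstrip(".").split(".")
    qname = b"".join(bytes([len(label)]) + label.encode("ascii") for label in labels) + b"\x00"
    return header + qname + struct.pack("!HH", 1, 1)  # QTYPE=A (1), QCLASS=IN (1)


def send_at_rate(server: str, name: str, qps: int, duration_s: float, port: int = 53) -> int:
    """Send queries at a fixed target rate for duration_s; return the number of replies received."""
    interval = 1.0 / qps
    answered = 0
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.settimeout(interval)  # wait at most one send interval for each reply
    try:
        start = time.monotonic()
        sent = 0
        while time.monotonic() - start < duration_s:
            sock.sendto(build_query(name, sent), (server, port))
            sent += 1
            try:
                sock.recvfrom(512)
                answered += 1
            except socket.timeout:
                pass  # a late reply may be read on a later iteration instead
            # Sleep until the next scheduled send time to hold the target rate.
            time.sleep(max(0.0, start + sent * interval - time.monotonic()))
    finally:
        sock.close()
    return answered
```

For example, `send_at_rate("127.0.0.1", "example.com", qps=500, duration_s=10.0)` targets the 500 queries per second mentioned above; both the address and the name would need to be replaced with a real resolver and a record it can answer.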

End-to-End Testing

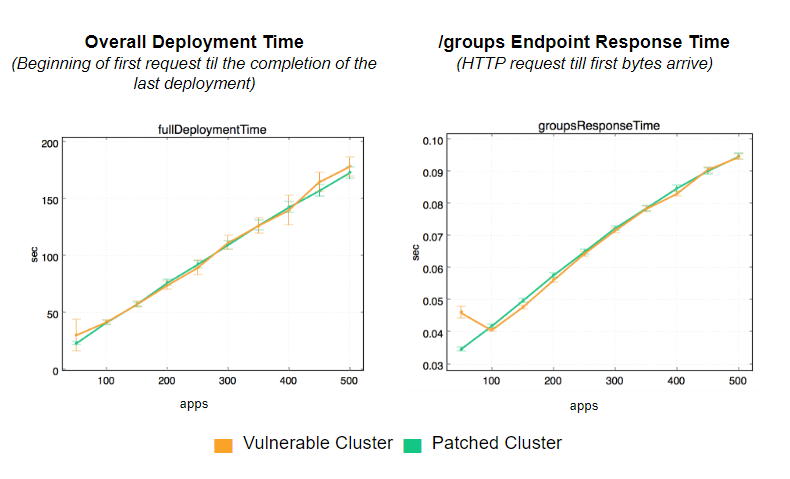

We measured the performance of the overall DC/OS system in an integrated end-to-end test. The test reproduces the experience of a user launching 50, 150, 200 ... 500 applications in a burst and measures how long it takes for those applications to be deployed. We also measure the response time of the `/groups` endpoint, which is consumed by the DC/OS UI.

The results, shown in the chart at the beginning of this section, did not reveal any measurable performance impact on the user experience.
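The end-to-end measurement pairs two timings: how long a burst of applications takes to become deployed, and how long a single request to the `/groups` endpoint takes. A minimal sketch under stated assumptions: the `done` callable (for example, a hypothetical function that asks the scheduler whether all N applications are running) and the endpoint URL are left to the reader. The `Authorization: token=<ACS token>` header follows the usual DC/OS convention for authenticated requests but should be checked against your cluster's setup.

```python
import time
import urllib.request
from typing import Callable


def time_until(done: Callable[[], bool], poll_interval_s: float = 1.0,
               timeout_s: float = 600.0) -> float:
    """Poll `done` until it returns True; return the elapsed wall-clock seconds."""
    start = time.monotonic()
    while not done():
        if time.monotonic() - start > timeout_s:
            raise TimeoutError(f"condition not met within {timeout_s} s")
        time.sleep(poll_interval_s)
    return time.monotonic() - start


def time_get(url: str, token: str = "") -> float:
    """Return the wall-clock seconds taken by one HTTP GET, including reading the body."""
    req = urllib.request.Request(url)
    if token:
        # Assumed DC/OS-style auth header; verify against your cluster's configuration.
        req.add_header("Authorization", f"token={token}")
    start = time.monotonic()
    with urllib.request.urlopen(req) as resp:
        resp.read()
    return time.monotonic() - start
```

A burst test would submit the N applications, call `time_until` with a check of your own, and call `time_get` repeatedly against the `/groups` URL of your cluster to build a response-time distribution.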

We will continue testing individual DC/OS components as well as various end-to-end usage scenarios, and will keep updating this document as more results become available.
