Apache Mesos helps Indeed eliminate operational bottlenecks and empower teams to more fully own their products

Independent teams are vital to Indeed development. With a rapidly growing engineering organization, we strive to reduce the number of team dependencies. At Indeed, we let teams manage their own deployment infrastructure. This benefits velocity, quality, and architectural scalability. Apache Mesos helps us eliminate operational bottlenecks and empower teams to more fully own their products.

The operations bottleneck

During Indeed's early years, we manually provisioned and configured applications. For every new application, we sent a request to the operations team, which would then attempt to find a server with enough capacity to run the new application. If none could be found, operations would spin up new virtual machines or order additional servers. Provisioning a new application could take up to two months.

Subsequent deployments were faster and more self-service, but that first provisioning step was a serious bottleneck. As a result, developers optimized for their own velocity at the expense of application design. Applications became bloated monoliths, as it was easier to bolt on new services than to undertake the time-consuming process of creating new applications. This didn't scale. Something had to change.

Enter Mesos

Around three years ago, Indeed began using Mesos, which gave teams the freedom to configure, deploy, and monitor their applications themselves. Today, if an application needs more CPU, memory, or disk, the team adds it. The primary benefits of this are:

No gatekeepers. This increases velocity and the propensity for scalable architecture.

Teams know the performance profile of their application. Because teams must specify their own CPU, memory, and disk numbers, they become familiar with expected performance, troubleshooting, and areas for improvement.

Increased reliability. When a server goes down, applications are restarted elsewhere. Engineers no longer have to manage individual instances.

Indeed's Mesos ecosystem

Mesos alone is not enough to reliably run applications. Our Mesos ecosystem incorporates many open source projects and in-house applications built to create a seamless experience for teams.

Marathon for daemons

We use Marathon to run daemons, though most Indeed developers are unaware of it. Because Indeed runs in ten data centers and a single Marathon instance can run in only one, we wrote a system called Marvin that coordinates deployment across all data centers. Developers independently specify their resource requirements, the version of the application to run, the number of instances, and the data centers in which to run. An agent in each data center compares the defined configuration with Marathon's configuration. If they don't match, the agent works directly with Marathon to scale instances up or down, or to initiate a new deployment.

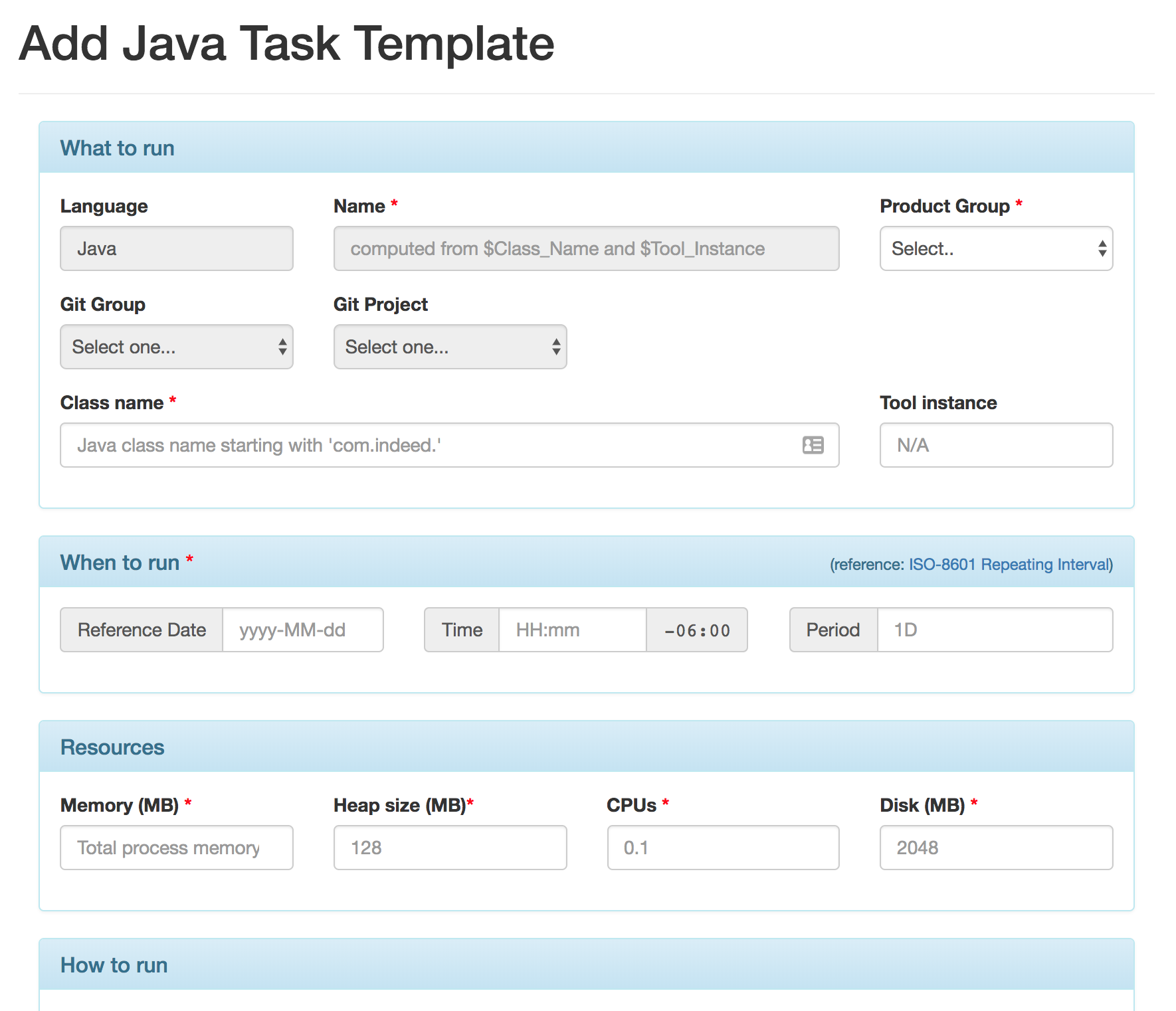
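
The comparison each agent performs can be pictured as a small reconciliation step. The following Python sketch is illustrative only: the `AppState` type, the `reconcile` function, and the action names are hypothetical and do not come from Marvin or Marathon. It assumes that a version mismatch triggers a new deployment and that an instance-count mismatch triggers scaling.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AppState:
    """Hypothetical view of one application in one data center."""
    version: str
    instances: int


def reconcile(desired: AppState, actual: Optional[AppState]) -> str:
    """Return the action an agent would take to converge on `desired`."""
    if actual is None or actual.version != desired.version:
        return "deploy"      # unknown app or different version: new deployment
    if actual.instances < desired.instances:
        return "scale_up"
    if actual.instances > desired.instances:
        return "scale_down"
    return "noop"            # running state already matches the definition


desired = AppState(version="1.4.2", instances=6)
print(reconcile(desired, AppState(version="1.4.2", instances=4)))
print(reconcile(desired, AppState(version="1.4.1", instances=6)))
```

Because the function only compares two states, running it repeatedly is safe: once the running state matches the definition, it returns `noop`.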

Our internal tool for batch and one-off jobs

We also run a large number of batch or "one-off" jobs. Marathon is not appropriate for this use case, so we built Orc, a Mesos framework similar to Marathon that handles configuring and scheduling these jobs, tasks that previously fell to the operations team. Because we built the tool, we can make a number of optimizations, such as last-host affinity. This interacts nicely with our Resilient Artifact Distribution system so that jobs run close to the data they require. Developers can also schedule their jobs to run whenever they need to.
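
As a rough illustration of last-host affinity, the sketch below prefers the host a job last ran on when that host appears among the available resource offers. Orc's internals are not described here, so the `pick_host` function, its arguments, and the host names are all hypothetical. Falling back to the first offer is likewise an assumption.

```python
from typing import Optional, Sequence


def pick_host(offers: Sequence[str], last_host: Optional[str]) -> Optional[str]:
    """Choose a host for a job, preferring the one it last ran on."""
    if not offers:
        return None          # nothing offered: the job waits for the next round
    if last_host in offers:
        return last_host     # affinity hit: data is likely already nearby
    return offers[0]         # assumed fallback: take the first available host


print(pick_host(["host-a", "host-b", "host-c"], last_host="host-b"))
print(pick_host(["host-a", "host-c"], last_host="host-b"))
```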

HAProxy for load balancing

With Marathon constantly bringing up new instances on different servers and different ports, we needed an easy way to address these instances. We use HAProxy as a reverse proxy due to its well-known performance characteristics. We wrote a small application that discovers where daemons are running and generates HAProxy configurations to match. When the configuration changes, we try to dynamically update HAProxy using its Runtime API. If that's not possible, we restart HAProxy using a seamless reload mechanism that ensures that no packets or requests are lost.
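
Generating the configuration from discovered instances might look roughly like the Python sketch below. It is a simplified illustration rather than our actual tool: the `render_backends` function, the application name, and the addresses are hypothetical, and only `backend` sections with `server` lines are emitted. Sorting the output keeps it stable, so a plain string comparison shows whether anything changed.

```python
from typing import Dict, List, Tuple

Instance = Tuple[str, int]  # (host, port) of one running daemon instance


def render_backends(apps: Dict[str, List[Instance]]) -> str:
    """Render one HAProxy `backend` section per application."""
    lines: List[str] = []
    for app in sorted(apps):
        lines.append(f"backend {app}")
        for i, (host, port) in enumerate(sorted(apps[app])):
            # `check` enables HAProxy health checks for this server
            lines.append(f"    server {app}-{i} {host}:{port} check")
        lines.append("")
    return "\n".join(lines)


# Hypothetical discovery result: one app running on two hosts
discovered = {"jobsearch": [("10.0.0.5", 31002), ("10.0.0.4", 31017)]}
new_config = render_backends(discovered)
print(new_config)

# Only act when the rendered configuration differs from the previous one
previous_config = ""
config_changed = new_config != previous_config
```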

Vault for configuration

Lastly, we required a robust way to configure our applications. Most applications at Indeed are configured via a simple, flat properties file. Prior to Mesos, we used Puppet to disseminate properties files to each data center, but this wasn't self-service and there was a high degree of lag. We wanted to make it quick and easy for teams to securely configure their applications themselves, so we designed a system built around Vault, HashiCorp's product for managing secrets. Before an application runs, we generate a short-lived token for retrieving the properties. We built a small Marathon plugin that does this for Marvin daemons, and we modified Orc to do this for batch jobs.
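
To make the short-lived token idea concrete, the sketch below builds (but does not send) a request to Vault's token creation endpoint, `/v1/auth/token/create`. It is an assumption-laden illustration, not our plugin: the `token_create_request` function, the Vault address, the policy name, and the five-minute TTL are all hypothetical. A real call would also need an `X-Vault-Token` header from a caller allowed to create tokens.

```python
import json
from typing import Dict, List


def token_create_request(vault_addr: str, policies: List[str],
                         ttl: str = "5m") -> Dict[str, object]:
    """Describe the HTTP request for a short-lived, non-renewable token."""
    return {
        "method": "POST",
        "url": f"{vault_addr.rstrip('/')}/v1/auth/token/create",
        "body": {
            "policies": policies,   # limits which properties can be read
            "ttl": ttl,             # the token expires shortly after startup
            "renewable": False,     # assumed: the application cannot extend it
        },
    }


# Hypothetical address and policy name, for illustration only
request = token_create_request("https://vault.example.com:8200/",
                               ["myapp-properties-read"])
print(json.dumps(request, indent=2))
```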

Result: Independent teams and scalable applications

All of these changes led to a 14% decrease in deployment time. They also reduced provisioning time from months to minutes and allowed our development teams to take more responsibility for their applications.

We've seen a sharp fall in configuration and deployment tickets, and we've reduced our average configuration ticket resolution time from 15.6 to 3.4 days. As a result, the operations team can focus on more pressing initiatives, like re-allocating resources to create a site reliability engineering practice.

We are now working toward Docker containerized deployments on Mesos. Developers will eventually roll their own Docker images, automatically scan them for vulnerabilities, and easily deploy their containerized apps on our cloud infrastructure. With these upcoming advances, we will continue enabling new capabilities on top of Mesos, allowing our engineering teams to independently create scalable applications.

This content was also posted to the Indeed Engineering Blog and Medium.