The age of highly accessible, open source machine learning tools is upon us. Machine learning is no longer niche: everyone from data scientists to Japanese cucumber farmers is using it. But what is machine learning?

Machine learning is exactly what it sounds like: software that can learn to solve a problem. Using large sets of data, an algorithm can be trained to understand that data. For example, if given enough data about key attributes which influence housing prices, the algorithm should be able to predict the price of a house based on its various properties.
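The housing example can be made concrete with a minimal sketch. It uses plain Python and ordinary least squares rather than TensorFlow, and the floor areas and prices are made-up values chosen only for illustration; the names `fit_line` and `predict` are hypothetical helpers, not part of any library.

```python
# Toy training data (made-up values): floor area in square metres
# and sale price in thousands.
areas = [50.0, 80.0, 100.0, 120.0, 150.0]
prices = [150.0, 240.0, 300.0, 360.0, 450.0]


def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply a fitted (slope, intercept) pair to a new input."""
    slope, intercept = model
    return slope * x + intercept


# "Training" learns the relationship from the data...
model = fit_line(areas, prices)
# ...and the learned model is then applied to a house it has not seen.
predicted = predict(model, 90.0)
print(f"Predicted price for 90 m^2: {predicted:.1f}k")
```

A real model would use many attributes (location, age, number of rooms) and far more data, which is where a library such as TensorFlow becomes useful.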

Today on STACK That, we're talking to Rajat Monga, Director of Engineering for TensorFlow, one of the projects working to make machine learning available to everyone.

TensorFlow is one of the most popular machine learning libraries currently available, and for good reason. It's fast, highly readable, supports many types of distributions, and is incredibly well supported by Google. Since its open sourcing in 2015, it has become one of the most forked projects on GitHub and has over 1000 active community contributors.

"To teach machines to learn, you need programs to do the trainings. TensorFlow is one of the tools that helps that," says Rajat Monga, TensorFlow's Director of Engineering.

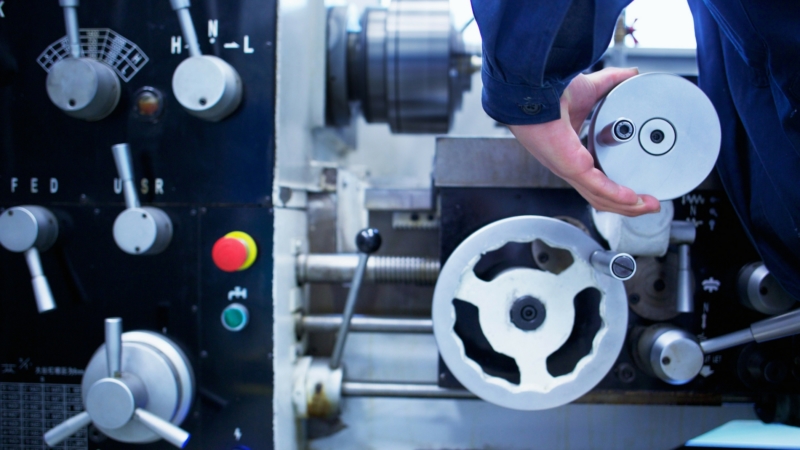

There's no better example of TensorFlow's ease of use than the story of Japanese engineer Makoto Koike. Makoto was able to build a cucumber sorting assembly line with TensorFlow all by himself. He used TensorFlow to train an algorithm that recognized the various types of cucumbers grown on his parents' farm and sorted them accordingly. This process massively reduced the amount of labor for his mother, who previously had to spend all day sorting the cucumbers manually. With this new system in place, his parents were able to focus their efforts on growing more and better vegetables.

As long as they have the data, businesses can start using TensorFlow to identify patterns and recognize trends. Insurance companies can predict the risk factor of their customers. An e-commerce company might use it to predict customer churn or fraudulent transactions. The sky is the limit.

And it's not just farmers or small business owners who can make the most of their data using machine learning. Artificial intelligence, as a whole, enables businesses to increase efficiency and better adapt to an increasingly hybrid IT world. For example, ahead of Discover 2017 Madrid, HPE introduced the industry's first artificial intelligence recommendation engine designed to simplify and reduce the guesswork in managing infrastructure and improve application reliability.

"HPE InfoSight marks the first time a major storage vendor has been able to predict issues and proactively resolve them before a customer is even aware of the problem," said Bill Philbin, Senior Vice President, HPE GM Storage.

Mesosphere makes distributed TensorFlow implementations even easier with single-click deployment available via the DC/OS service catalog. Mesosphere DC/OS pools resources like GPU, CPU, memory, and storage across all nodes to help businesses build, deploy, and elastically scale modern applications and data services. DC/OS makes running containers, data services, and microservices easy across your own hardware and cloud instances. Running distributed TensorFlow on DC/OS helps data science teams to:

- Simplify the deployment of distributed TensorFlow: Deploying a distributed TensorFlow cluster with all of its components on any infrastructure, whether it's bare metal, virtual, or public cloud, is as simple as passing a JSON file to a single CLI command. Updating and tweaking parameters to fine-tune and optimize becomes trivial.

- Share infrastructure across teams: DC/OS allows multiple teams to share the infrastructure and launch multiple TensorFlow jobs while maintaining complete resource isolation. Once a TensorFlow job is done, capacity is released and made available to other teams.

- Deploy different TensorFlow versions on the same cluster: As with many DC/OS services, you can easily deploy multiple instances of a service, each with a different version, on the same cluster. This means that when a new version of TensorFlow is released, one team can take advantage of the latest features and capabilities without running the risk of breaking another team's code.

- Allocate GPUs dynamically: GPUs greatly increase the speed of deep learning models, especially during training. However, GPUs are precious resources that must be used efficiently. Since DC/OS automatically detects all GPUs on a cluster, GPU-based scheduling can be used to allow TensorFlow to request all or some of the GPU resources on a per-job basis (similar to requesting CPU, memory, and disk resources). Once the job is complete, the GPU resources are released and made available to other jobs.

- Focus on model development, not deployment: DC/OS separates the model development from the cluster configuration by eliminating the need to manually introduce a ClusterSpec in the model code. Instead, the person deploying the TensorFlow package specifies the properties of the various workers and parameter servers they would like their model to run with, and the package generates a unique ClusterSpec for it at deploy time. Under the hood, the package finds a set of machines to run each worker and parameter server on, populates a ClusterSpec with the appropriate values, starts each parameter server and worker task, and passes it the generated ClusterSpec. The developer simply writes their code expecting this object to be populated, and the package takes care of the rest. The figure below shows a JSON snippet that can be used to deploy a TensorFlow package from the DC/OS CLI with a mix of CPU and GPU workers.

- Automate failure recovery: The TensorFlow package is written using the DC/OS SDK and leverages built-in resiliency features including automatic restart so that failed tasks effectively self-heal.

- Deploy job configuration parameters securely at runtime: The DC/OS secrets service dynamically deploys credentials and confidential configuration options to each TensorFlow instance at runtime. Operators can easily add credentials to access confidential information or specific configuration URLs without exposing them in the model code.
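To illustrate the ClusterSpec idea from the list above, here is a minimal sketch of how a cluster description might be generated from a requested number of parameter servers and workers. It is not the DC/OS package's actual logic: the function name `build_cluster_spec`, the host name pattern, and the port are all hypothetical. The only assumption about TensorFlow itself is that `tf.train.ClusterSpec` accepts a dictionary mapping job names to lists of `host:port` addresses.

```python
def build_cluster_spec(job_counts,
                       host_template="{job}-{index}.example.internal",
                       port=2222):
    """Map each job name to a list of "host:port" task addresses.

    The host template and port are placeholders; a real deployment
    would fill them in from whatever machines the scheduler picked.
    """
    spec = {}
    for job, count in job_counts.items():
        spec[job] = [
            f"{host_template.format(job=job, index=i)}:{port}"
            for i in range(count)
        ]
    return spec


# The person deploying asks for one parameter server and two workers.
cluster = build_cluster_spec({"ps": 1, "worker": 2})
print(cluster)

# With TensorFlow installed, a dictionary of this shape can be handed
# to tf.train.ClusterSpec(cluster); the model code only consumes it.
```

Keeping this generation step outside the model code is what lets the same model run unchanged on clusters of different sizes.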

Learn more about how machine learning is going mainstream in this week's podcast below:

Listen and subscribe to the latest episodes of STACK That on our podcast content hub, or with your favorite podcast app:

Our Guest

Rajat Monga, Director of Engineering at TensorFlow

Rajat Monga leads TensorFlow at the Google Brain team, powering machine learning research and products worldwide. As a founding member of the team he has been involved in co-designing and co-implementing DistBelief and more recently TensorFlow, an open source machine learning system. Prior to this role, he led teams in AdWords, built out the engineering teams and co-designed web scale crawling and content-matching infrastructure at Attributor, co-implemented and scaled eBay's search engine and designed and implemented complex server systems across a number of startups. Rajat received a B.Tech. in Electrical Engineering from Indian Institute of Technology, Delhi.

Our Hosts

Florian Leibert, Co-Founder & CEO at Mesosphere

Florian Leibert co-founded Mesosphere (now D2iQ) in 2013 and currently serves as CEO. Since 2013, the company has made Mesosphere DC/OS, which allows webscale and enterprise companies to quickly deliver containerized, data-intensive applications on any infrastructure. Florian is passionate about building IoT infrastructure and enjoys discussing the benefits of the modern application stack as well as how D2iQ customers are building world-changing technology. He is also the main creator of Marathon, an orchestrator and scheduler that operates on top of the Mesos software. Florian Leibert drove fundraising efforts through four rounds of financing from prominent investors like Khosla Ventures and Andreessen Horowitz. In addition to his role at D2iQ, Florian Leibert serves as an investor with Away and Drift.

Prior to co-founding Mesosphere, Florian Leibert held engineering leadership positions at prominent internet sites, including Airbnb, Twitter, Ning, and Adknowledge. At age 16, he co-founded online marketplace Knaup Multimedia in Germany.

Byron Reese, CEO & Publisher at Gigaom and CEO at Knowingly

With 25 years as a successful tech entrepreneur, with multiple IPOs and exits along the way, Byron Reese is uniquely suited to comment on the transformative effect of technology on the workplace and on society at large.

As a successful serial entrepreneur and award-winning futurist, Byron balances two unique perspectives. As a futurist, he leads his audience to explore the unprecedented technological changes destined to trigger the dramatic transformation of society. And as an entrepreneur, he shares how to profit from this change while still meeting the practical realities of operating a business.

Speaking across the globe, Byron brings great enthusiasm and talent for deciphering our common destiny and unlocking business opportunities within it. Byron's keynotes and appearances include the PICNIC Festival in Amsterdam, SXSW, TEDx Austin, Wolfram Data Summit, Spartina, and the IEEE Global Humanitarian Technology Conference, among others.

Byron is also the publisher of Gigaom, a role enhanced by 20+ years' experience building and running successful Internet and software companies. Of the five companies he either started or joined early, two went public, two were sold, and one resulted in a merger.